The Mental Rotation Task 3D

The Mental Rotation Task 3D is a spatial cognition paradigm used to measure how individuals mentally transform objects in three-dimensional space. Participants evaluate rotated stimuli and decide whether they match a reference configuration despite changes in viewpoint.

Task Format | Mental Rotation Task 3D Online & In-Lab

In the Mental Rotation Task (3D Body Rotation), participants view abstract depictions of a human figure shown from different viewpoints and rotation angles. The figure appears either from the front (face visible) or from the back (no face visible), and in each picture one limb (an arm or a leg) is raised. Participants decide whether the raised limb is the left limb or the right limb. Each session begins with a short practice block in which figures appear upright, so participants can learn the left–right response rule before the main experimental trials. In the main task, the same figures are presented at different rotation angles and viewpoints, requiring mental transformation across depth and perspective changes.

Two versions of the Mental Rotation Task (3D Body Rotation) are available, each optimized for the type of device and input method being used:

Desktop Version

In the desktop version, a body figure appears at the center of the screen on each trial. Participants press D if the raised limb is on the left side and K if it is on the right side. Practice trials use upright figures, while rotated figures are introduced only in the main task.

Mobile Version

In the mobile version, the same task structure is used. Participants respond by tapping Left or Right buttons displayed on the screen. Practice trials use upright figures, while rotated figures are introduced only in the main task.
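The response rule shared by both versions can be sketched as a small scoring function for offline analysis. This is only an illustration: `KEY_TO_SIDE` and `score_response` are hypothetical names, not part of Labvanced, and the mapping (D = left, K = right) is taken from the desktop description above.

```python
# Desktop key mapping as described above: D = left, K = right.
KEY_TO_SIDE = {"D": "Left", "K": "Right"}


def score_response(choice: str, side: str) -> int:
    """Return 1 if the response matches the side of the raised limb, else 0.

    `choice` is either a keypress ("D"/"K") or a tapped button label
    ("Left"/"Right"); `side` is the correct answer for the trial.
    """
    normalized = choice.strip()
    # Keypresses are translated to a side; button labels already name one.
    response_side = KEY_TO_SIDE.get(normalized.upper(), normalized.capitalize())
    return int(response_side == side.strip().capitalize())
```

For example, `score_response("D", "Left")` counts as correct on a desktop trial, and `score_response("Right", "Right")` counts as correct on a mobile trial.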

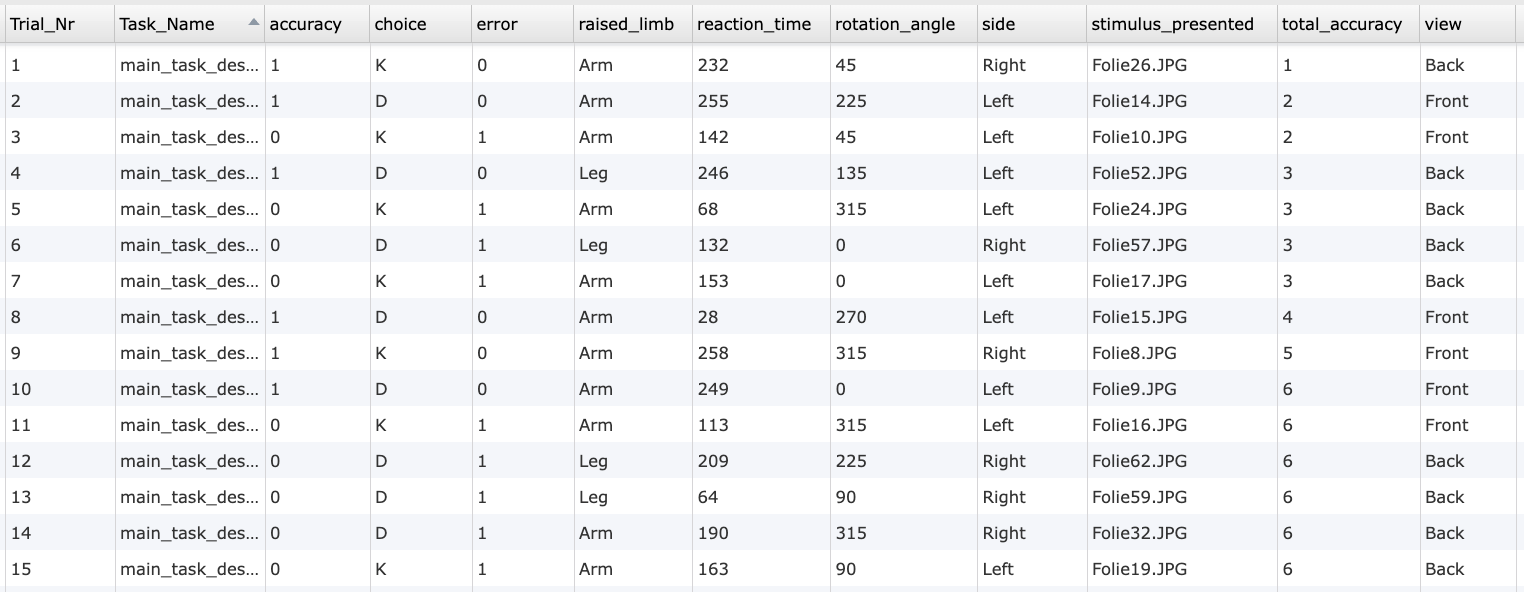

Mental Rotation Task 3D Metrics and Data Collected

The Mental Rotation Task 3D captures a range of behavioral measurements that reveal how individuals mentally manipulate and compare objects across three-dimensional space. The variables recorded ultimately enable researchers to assess reaction times, response accuracy, error patterns, and performance differences across rotation angles, viewing perspectives, and stimulus conditions (e.g., normal vs. mirrored objects). These measures help quantify visuospatial transformation ability, mental rotation efficiency, and decision-making under increased spatial complexity. All variables can be viewed and customized within the task’s Variables Tab.

Below are several of the most informative indicators that researchers frequently analyze in this version of the task:

| Variable Name | Description |
|---|---|
| accuracy | Trial-level accuracy (1 = correct, 0 = incorrect) |
| accuracy_total | Total number of correct responses across trials |
| choice | Response given by the participant (keypress = K/D or button click = "Left" / "Right") |
| error | Trial-level error (0 = correct, 1 = wrong) |
| raised_limb | Specifies which limb (arm or leg) is raised in the stimulus |
| reaction_time | Time taken (in milliseconds) by the participant to respond after stimulus onset |
| rotation_angle | Degree of rotation applied to the figure during the specific trial |
| side | The raised limb for the presented stimulus (Left / Right) |
| stimulus_presented | File name of image presented as stimulus in each trial |
| view | Perspective of the figure (front / back) |

Data table showing an excerpt of individual trial-level outputs from the Mental Rotation Task (3D Body Rotation), including accuracy, participant choice, reaction time, rotation angle, stimulus side, raised limb, and presented image.
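Once trial-level data have been exported, the variables listed above can be summarized per rotation angle. The sketch below assumes a CSV export whose column names match the variable names in the table (`accuracy`, `reaction_time`, `rotation_angle`). The actual export layout may differ, and the sample rows are invented purely for illustration.

```python
import csv
import io
from collections import defaultdict
from statistics import mean


def summarize_by_angle(rows):
    """Return {rotation_angle: (proportion_correct, mean_rt_of_correct_trials_ms)}."""
    grouped = defaultdict(list)
    for row in rows:
        grouped[int(row["rotation_angle"])].append(row)

    summary = {}
    for angle, trials in sorted(grouped.items()):
        proportion_correct = mean(int(t["accuracy"]) for t in trials)
        # Reaction times are averaged over correct trials only (a common convention).
        correct_rts = [float(t["reaction_time"]) for t in trials if int(t["accuracy"]) == 1]
        mean_rt = mean(correct_rts) if correct_rts else None
        summary[angle] = (proportion_correct, mean_rt)
    return summary


# Invented sample rows, not real participant data.
SAMPLE = """accuracy,reaction_time,rotation_angle,view
1,600,0,front
1,700,0,back
1,900,90,front
0,1500,90,back
"""

rows = list(csv.DictReader(io.StringIO(SAMPLE)))
summary = summarize_by_angle(rows)
for angle, (acc, rt) in summary.items():
    print(angle, acc, rt)
```

The same grouping can be extended to `view` or `raised_limb` by changing the grouping key.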

This study investigates how people mentally rotate images of human bodies. Participants view figures from different angles and must decide whether the raised limb is the right limb or the left limb.

Technology Driving the Mental Rotation Task 3D for Online & In-Lab Research

The Mental Rotation Task 3D requires precise control over stimulus presentation, timing, and response handling. Labvanced provides flexible tools that support complex spatial task designs across different study environments.

Flexible Presentation of Three-Dimensional Stimuli: Researchers can present stimuli as images or visual objects that depict different viewpoints. Orientation changes can be defined through condition logic, allowing systematic manipulation of angular disparity across trials.

Cross-Device Input Support: Responses can be collected via keyboard input on desktop devices or through on-screen buttons on touch-enabled devices. The same task logic can be reused while adapting the interface to different hardware.

Desktop App Mode for Controlled Experiments: For studies requiring stronger control over timing or hardware integration, the task can be deployed using the Labvanced desktop app. This supports offline testing and compatibility with EEG or other LSL-based systems.

Remote and Longitudinal Study Deployment: The task can be administered remotely and repeated across multiple sessions, making it suitable for studies examining spatial skill development or training effects over time.

Optional Webcam Eye Tracking Integration: Researchers can integrate webcam-based eye tracking to analyze gaze behavior and perspective processing during three-dimensional mental rotation trials.

Webcam Eye Tracking

Capture gaze patterns and visual attention with built-in, code-free and peer-reviewed webcam eye-tracking.

Timing Precision

Capture reaction times, task performance, and more with millisecond accuracy for time-sensitive tasks.

Desktop App

Run in-lab studies using the Desktop App, compatible with EEG and other LSL-connected lab hardware.

Customization of the Mental Rotation Task 3D

There are many ways to adapt this Mental Rotation Task 3D template to specific research questions. Below are a few topics researchers commonly ask about when modifying this task.

Viewpoint and Rotation Conditions

Three-dimensional rotation tasks often involve different viewing angles or perspective shifts. Researchers can define multiple viewpoint conditions in the Factors & Randomization and Trials & Conditions panels to control how the stimulus orientation changes across trials.
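When planning which conditions to enter in those panels, it can help to enumerate the full factorial design first. The sketch below is a planning aid written in Python, not Labvanced code. The factor levels for view, limb, and side come from the task description, while the rotation angles in `ANGLES` are hypothetical placeholders, since the template's actual angles are not listed here.

```python
import itertools
import random

VIEWS = ["front", "back"]
LIMBS = ["arm", "leg"]
SIDES = ["Left", "Right"]
ANGLES = [0, 90, 180, 270]  # hypothetical angles, chosen only for illustration


def build_trials(repetitions=1, seed=None):
    """Cross all factor levels, repeat each condition, and shuffle the order."""
    conditions = [
        {"view": v, "raised_limb": l, "side": s, "rotation_angle": a}
        for v, l, s, a in itertools.product(VIEWS, LIMBS, SIDES, ANGLES)
    ]
    # Copy each condition so repeated trials are independent dictionaries.
    trials = [dict(c) for _ in range(repetitions) for c in conditions]
    random.Random(seed).shuffle(trials)
    return trials


trials = build_trials(repetitions=2, seed=1)
print(len(trials))  # 2 views x 2 limbs x 2 sides x 4 angles x 2 repetitions
```

Passing a fixed `seed` makes the shuffled order reproducible, which is useful when documenting a design.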

Stimulus Presentation

Different types of 3D-style stimuli can be used by replacing the visual object directly in the editor. Researchers can add images using an Image Object, text using a Text Object, or other visual elements from the Objects panel, then adjust size, position, and appearance through the Object Properties panel.

Response Mapping

Keyboard keys or touchscreen buttons can be reassigned to match different judgment types by modifying the relevant Events. Counterbalancing of response positions can be implemented through trial conditions to reduce motor bias.
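One simple counterbalancing scheme alternates the key mapping across participants. The sketch below only illustrates the assignment logic. `MAPPINGS` and `mapping_for` are hypothetical names, the reversed mapping is an assumption rather than a feature of the template, and in Labvanced the equivalent setup would be configured through conditions and Events rather than code.

```python
MAPPINGS = [
    {"D": "Left", "K": "Right"},  # default mapping described for the desktop version
    {"D": "Right", "K": "Left"},  # reversed mapping (assumed, for counterbalancing)
]


def mapping_for(participant_number: int) -> dict:
    """Alternate mappings so each is used by about half of the participants."""
    return MAPPINGS[participant_number % len(MAPPINGS)]


for participant in range(4):
    print(participant, mapping_for(participant))
```

Because the judgment itself is left versus right, a reversed mapping places the "left" response on the right-hand key, so it is worth checking whether that conflict is acceptable for a given study before adopting it.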

Timing and Task Flow

Frame duration, stimulus timing, and response windows can be adjusted by editing frame durations or related Events. Practice blocks and experimental blocks can also be modified separately to accommodate increased difficulty often associated with 3D mental rotation.

Recommended Use and Applications of the Mental Rotation Task 3D

The Mental Rotation Task 3D is widely used to investigate perspective taking and spatial transformation processes that involve depth and viewpoint changes.

Embodied Spatial Cognition Research: Researchers use this task to study how individuals mentally transform objects in three-dimensional space and shift imagined viewpoints. Reaction times often increase with greater angular disparity, reflecting the cognitive cost of embodied rotation.

Developmental and Individual Differences Research: The task is used to examine variability in spatial visualization ability across individuals and age groups. Three-dimensional rotation tasks are particularly sensitive to differences in strategy use and spatial experience.

Applied and Training Research: Mental Rotation Task 3D paradigms are frequently used in studies exploring spatial training, simulation-based learning, and skill acquisition in technical domains. Because the task requires depth processing, it is often used to examine advanced spatial reasoning.

Neurocognitive Research: The task is included in studies investigating neural mechanisms underlying perspective taking and visual imagery. Researchers often combine behavioral measures with eye tracking or neurophysiological data to examine cognitive processing stages.
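The relationship between reaction time and angular disparity noted under Embodied Spatial Cognition Research is often quantified as a slope in milliseconds per degree. The sketch below is one way to estimate it with ordinary least squares. It assumes that rotation angles are given in degrees and that angles beyond 180 should be folded back (for example, 270 is treated as 90 away from upright), which is an analysis choice rather than something specified by the task. The sample values are invented.

```python
def angular_disparity(angle_deg: float) -> float:
    """Smallest rotation (0 to 180 degrees) between the figure and upright."""
    wrapped = angle_deg % 360
    return min(wrapped, 360 - wrapped)


def rt_slope(angles, rts_ms):
    """Least-squares fit of RT on angular disparity; returns (slope, intercept)."""
    xs = [angular_disparity(a) for a in angles]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(rts_ms) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, rts_ms))
    slope = sxy / sxx  # requires at least two distinct disparities
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Invented mean reaction times (ms) per rotation angle, for illustration only.
angles = [0, 90, 180, 270]
rts = [600.0, 780.0, 960.0, 780.0]
slope, intercept = rt_slope(angles, rts)
print(slope, intercept)
```

A steeper slope indicates a larger reaction-time cost per degree of rotation, and the intercept approximates the response time for upright figures.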
