Preferential Looking Test

The Preferential Looking Test measures visual preference by tracking where participants direct their gaze when presented with competing stimuli. Participants view multiple stimuli simultaneously, and their looking behavior is used to infer attention and choice. It is widely used in developmental and cognitive research to study perception, learning, and attention.


Task Format | Preferential Looking Test Online & In-Lab

In the Preferential Looking Test, participants are presented with two stimuli (social and non-social) displayed side by side while gaze behavior is recorded to infer visual preference. The task integrates eye tracking and head tracking for improved accuracy and data quality, along with optional webcam video recording for post-hoc analysis.

The template supports both image-based and video-based stimulus formats. The image version presents static stimuli, while the video version presents dynamic clips. Both follow the same viewing procedure and data collection pipeline.

Each session begins with a short calibration process to ensure accurate gaze tracking. An optional pre-task video call step can be included to review instructions with participants. During trials, participants view the stimuli naturally without manual responses while eye movements and webcam video are recorded. After completing the trials, a results summary is generated showing key gaze metrics.

Preferential Looking Task Metrics and Data Collected

The Preferential Looking Task captures a range of behavioral and multimodal measurements that reveal how visual attention and natural viewing behavior are distributed between social and non-social stimuli. Using predefined Areas of Interest (AOIs), researchers can quantify how long participants attend to specific regions of the screen.

The variables recorded enable researchers to assess gaze-based metrics such as fixation duration, fixation counts, and time to first fixation, alongside head tracking measures and synchronized video recordings. These measures help quantify attentional bias, visual engagement, and orientation behavior during passive viewing. All variables can be viewed and customized within the task’s Variables Tab.
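As a rough illustration of how these gaze metrics relate to AOIs, the sketch below derives fixation count, total fixation duration, and time to first fixation from a list of fixations that have already been assigned to an AOI. The `Fixation` record, its field names, and the AOI labels are hypothetical and do not represent the Labvanced export format.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Fixation:
    """Hypothetical fixation record; field names are assumptions."""
    onset_ms: float        # fixation start, relative to trial onset
    duration_ms: float     # how long the fixation lasted
    aoi: Optional[str]     # "social", "non_social", or None if outside all AOIs


def aoi_metrics(fixations, aoi):
    """Summarise the fixations that landed on one AOI within a single trial."""
    hits = [f for f in fixations if f.aoi == aoi]
    return {
        "fixation_count": len(hits),
        "total_duration_ms": sum(f.duration_ms for f in hits),
        # None when the AOI was never fixated during the trial
        "time_to_first_fixation_ms": min((f.onset_ms for f in hits), default=None),
    }


# Made-up example trial with four fixations
trial = [
    Fixation(120, 300, "social"),
    Fixation(450, 200, "non_social"),
    Fixation(700, 250, None),
    Fixation(980, 400, "social"),
]
social = aoi_metrics(trial, "social")
non_social = aoi_metrics(trial, "non_social")
```

Fixations outside both AOIs are ignored here; whether to count them as "looking away" is a design decision that depends on the research question.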

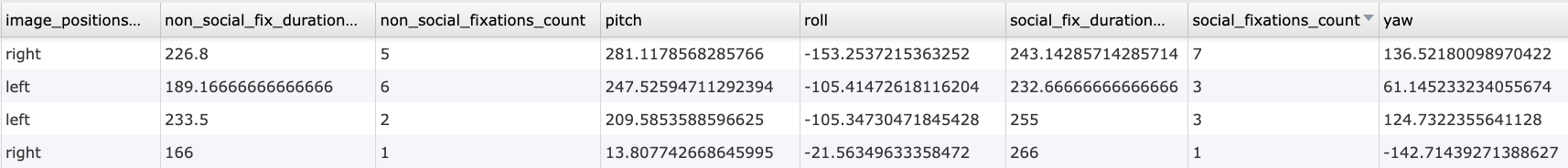

Below are examples of variables collected in the Labvanced version of the Preferential Looking Task:

| Variable Name | Description |
|---|---|
| image_positions_NS | Position of the non-social stimulus (left / right) |
| non_social_fix_duration_trial_mean | Total fixation duration on non-social AOI |
| non_social_fixations_count | Number of fixations on non-social stimulus |
| pitch | Vertical head movement (degrees) |
| roll | Head tilt (degrees) |
| yaw | Horizontal head movement (degrees) |
| social_fix_duration_trial_mean | Total fixation duration on social AOI |
| social_fixations_count | Number of fixations on social stimulus |

Data table showing an excerpt of individual trial-level outputs from the Preferential Looking Task, including fixation duration, fixation count, stimulus position, and head orientation metrics.
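One possible way to turn trial-level rows like these into a single attentional-bias score is a proportion-of-looking index, sketched below. The index itself (social duration divided by total AOI duration), the millisecond units, the 20-degree head-pose cutoff, and the example rows are all assumptions made for illustration; only the column names come from the variable list above.

```python
def social_preference(social_ms, non_social_ms):
    """Proportion of AOI looking time spent on the social stimulus (0 to 1)."""
    total = social_ms + non_social_ms
    return None if total == 0 else social_ms / total


def head_within_limits(row, max_deg=20.0):
    """Assumed quality check: keep trials where the head stayed roughly frontal."""
    return all(abs(row[key]) <= max_deg for key in ("pitch", "roll", "yaw"))


# Made-up rows using the column names from the variable list
trials = [
    {"image_positions_NS": "left", "social_fix_duration_trial_mean": 1500.0,
     "non_social_fix_duration_trial_mean": 500.0, "pitch": 2.0, "roll": -1.0, "yaw": 4.0},
    {"image_positions_NS": "right", "social_fix_duration_trial_mean": 600.0,
     "non_social_fix_duration_trial_mean": 600.0, "pitch": 3.0, "roll": 0.0, "yaw": -35.0},
    {"image_positions_NS": "left", "social_fix_duration_trial_mean": 0.0,
     "non_social_fix_duration_trial_mean": 0.0, "pitch": 1.0, "roll": 1.0, "yaw": 2.0},
    {"image_positions_NS": "right", "social_fix_duration_trial_mean": 400.0,
     "non_social_fix_duration_trial_mean": 1200.0, "pitch": -4.0, "roll": 2.0, "yaw": 6.0},
]

kept = [row for row in trials if head_within_limits(row)]
scores = [
    social_preference(row["social_fix_duration_trial_mean"],
                      row["non_social_fix_duration_trial_mean"])
    for row in kept
]
valid = [s for s in scores if s is not None]   # drop trials with no AOI looking
mean_preference = sum(valid) / len(valid) if valid else None
```

A score above 0.5 would indicate more looking at the social stimulus and a score below 0.5 more looking at the non-social stimulus. Trials with no AOI fixations are left out of the mean rather than scored as 0.5.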

This study measures visual preference and attentional bias using gaze tracking. Participants view social and non-social stimuli while fixation behavior, head movement, and video recordings are captured for analysis.

Technology Driving the Preferential Looking Task for Online & In-Lab Research

Labvanced includes several technologies that make the Preferential Looking Task highly suitable for developmental, remote, and multimodal research:

Webcam Eye Tracking: Measure gaze direction, fixation duration, and attention shifts without specialized hardware.

Head Tracking Integration: Monitor participant positioning and head movement (pitch, yaw, roll) during viewing.

Attention Getter and Calibration Support: Include fixation cues and calibration steps to improve gaze accuracy, especially for infant-friendly designs.

Video Recording Object: Record participant webcam video during the task for post-hoc coding and behavioral verification.

Video Conference Object: Enable live interaction with participants to guide setup, calibration, and instructions.

Precise Stimulus Layout Control: Present side-by-side stimuli with controlled spacing, alignment, and size.

Web-Based and Desktop Deployment: Run studies online or in controlled lab environments.

Remote and Longitudinal Study Support: Collect data across multiple sessions and locations using integrated tools.

Webcam Eye Tracking

Capture gaze patterns and visual attention with built-in, code-free and peer-reviewed webcam eye-tracking.

Timing Precision

Capture reaction times, task performance, and more with millisecond accuracy for time-sensitive tasks.

Desktop App

Run in-lab studies using the Desktop App, compatible with EEG and other LSL-connected lab hardware.

Customization of the Preferential Looking Task

There are many ways to adapt this Preferential Looking Task template to meet specific research questions. Below are several customization themes researchers commonly explore when modifying this task.

Stimulus Version Selection

Participants can be assigned to image-based or video-based versions. Researchers can modify which version appears and adjust instructions in the task editor.

Stimulus Content and Presentation

Side-by-side stimuli can be replaced directly using Image Object or Video Object. Properties such as size, position, and appearance can be adjusted in the Object Properties panel.

Areas of Interest and Gaze Metrics

Viewing regions are defined as Areas of Interest (AOIs). Eye tracking Events reference these regions to calculate fixation counts and durations.
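Conceptually, an AOI is a screen region against which each gaze position is tested. The sketch below builds two rectangular AOIs for a centred side-by-side layout and classifies gaze points against them. The `AOI` class, the coordinate convention (origin at top-left, arbitrary frame units), and all dimensions are hypothetical; in Labvanced this logic is configured through the editor and Events rather than written by hand.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AOI:
    """Hypothetical rectangular AOI; origin is assumed to be the top-left corner."""
    name: str
    x: float        # left edge
    y: float        # top edge
    width: float
    height: float

    def contains(self, gx, gy):
        return (self.x <= gx < self.x + self.width
                and self.y <= gy < self.y + self.height)


def side_by_side_aois(frame_w, frame_h, stim_w, stim_h, gap, left_name, right_name):
    """Centre two equally sized stimuli horizontally with a fixed gap between them."""
    left_x = (frame_w - (2 * stim_w + gap)) / 2
    top_y = (frame_h - stim_h) / 2
    return [
        AOI(left_name, left_x, top_y, stim_w, stim_h),
        AOI(right_name, left_x + stim_w + gap, top_y, stim_w, stim_h),
    ]


def classify(gx, gy, aois):
    """Return the name of the AOI containing the gaze point, or None."""
    for aoi in aois:
        if aoi.contains(gx, gy):
            return aoi.name
    return None


# Made-up layout: 800 x 450 frame, 300 x 300 stimuli, 100-unit gap
aois = side_by_side_aois(800, 450, 300, 300, 100, "social", "non_social")
labels = [classify(200, 200, aois), classify(600, 200, aois), classify(400, 200, aois)]
```

With webcam-based gaze estimates, leaving a clear gap between the two AOIs helps keep noisy samples near the midline from being assigned to the wrong stimulus.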

Video Recording and Conference Flow

The Video Recording Object and Video Conference Object can be enabled, disabled, or adjusted to control recording and interaction stages.

Calibration and Trial Timing

Calibration procedures, viewing durations, and transitions can be modified through frame timing and Events.

If you need help customizing this task, feel free to contact us.

Recommended Use and Applications of the Preferential Looking Task

The Preferential Looking Task is widely used across research domains investigating perception, learning, and attention.

Developmental Research: Used to study perception and learning in infants and young children through gaze behavior.

Language and Category Learning Studies: Examines how participants associate visual stimuli with sounds or categories.

Attention and Visual Preference Research: Investigates how visual features guide attention and preference.

Clinical and Neurodevelopmental Research: Used to study atypical gaze patterns in developmental conditions.

Social and Emotional Processing Research: Examines preference for faces, expressions, and social cues.
