Emotion Detection Trigger

Overview

The emotion detection trigger in Labvanced automatically initiates events or records responses when a participant’s emotional state is detected. As a key component of Labvanced's emotion detection, it allows researchers to link stimulus presentation or task changes directly to real-time emotional reactions. This makes experiments more dynamic and enables more precise measurement of how emotions influence behavior, attention, or decision-making.

Note: All processing occurs client-side, ensuring GDPR compliance and guaranteeing that no facial data is ever transmitted or stored externally.

Recording Emotion Detection Data

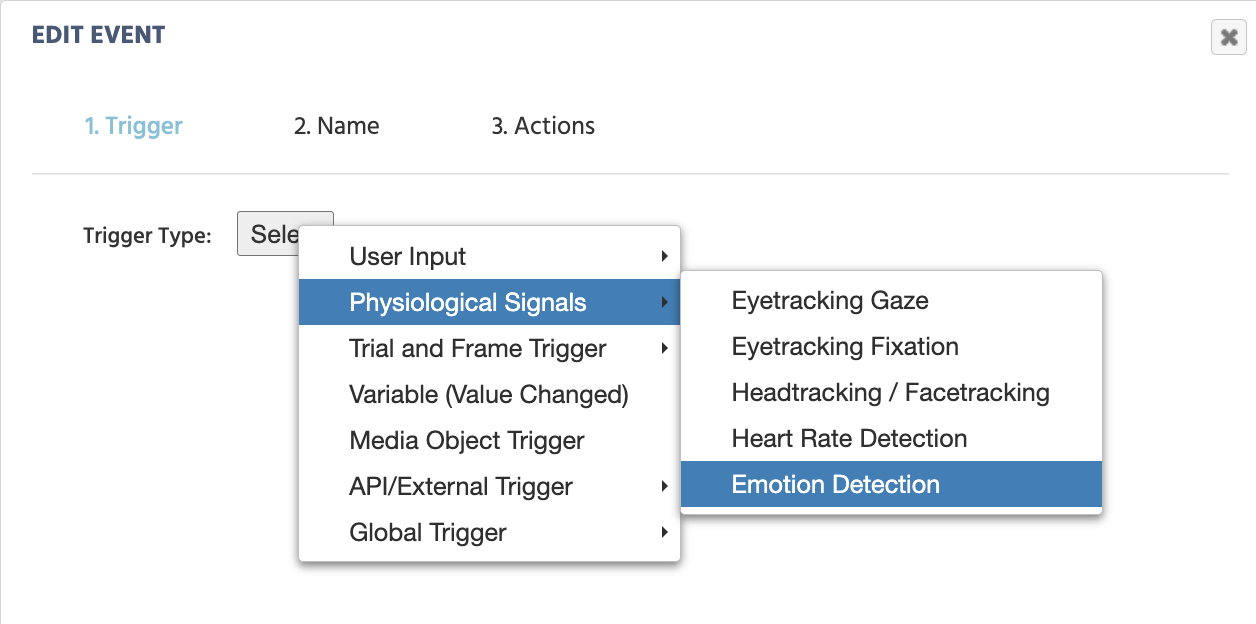

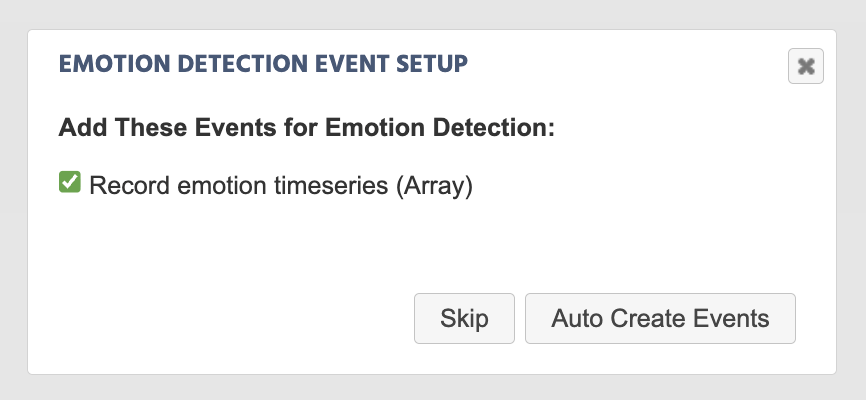

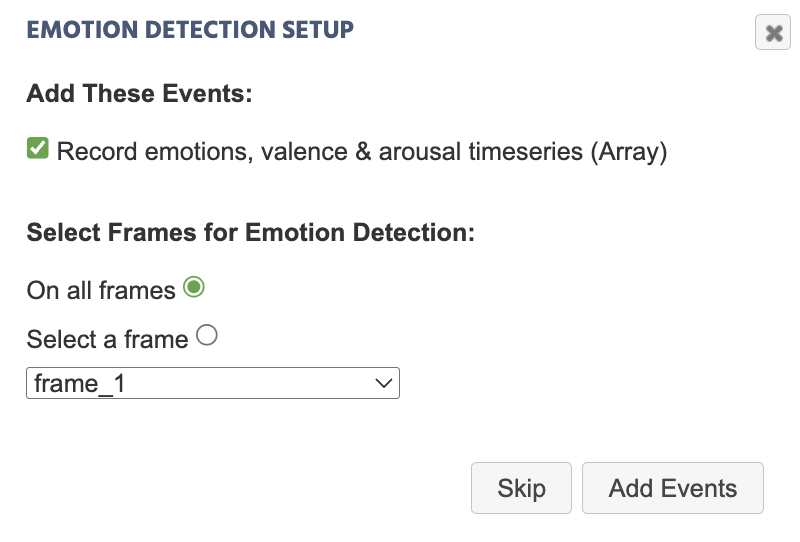

Upon selecting the trigger and giving the action a name, a dialog box will appear prompting you to set up the relevant events for recording data for emotion detection:

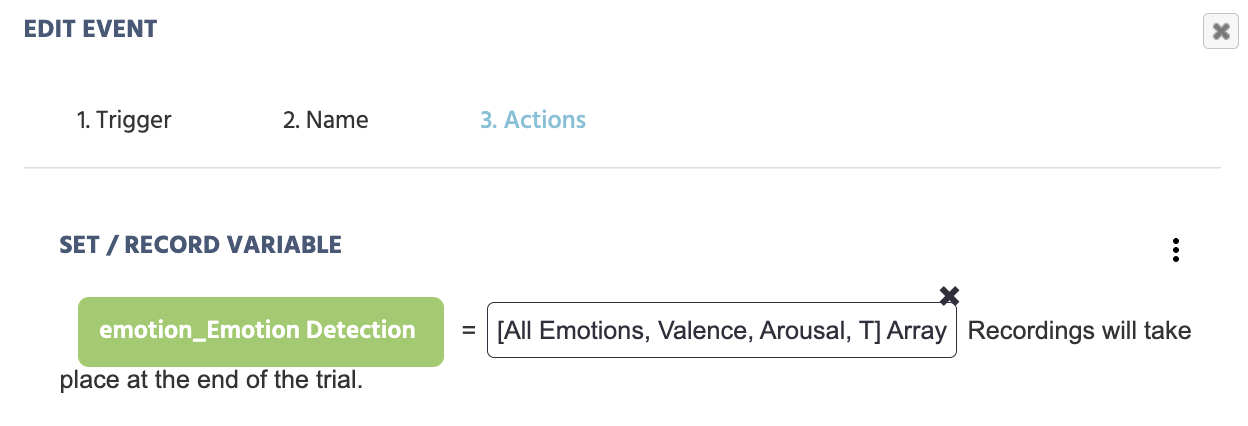

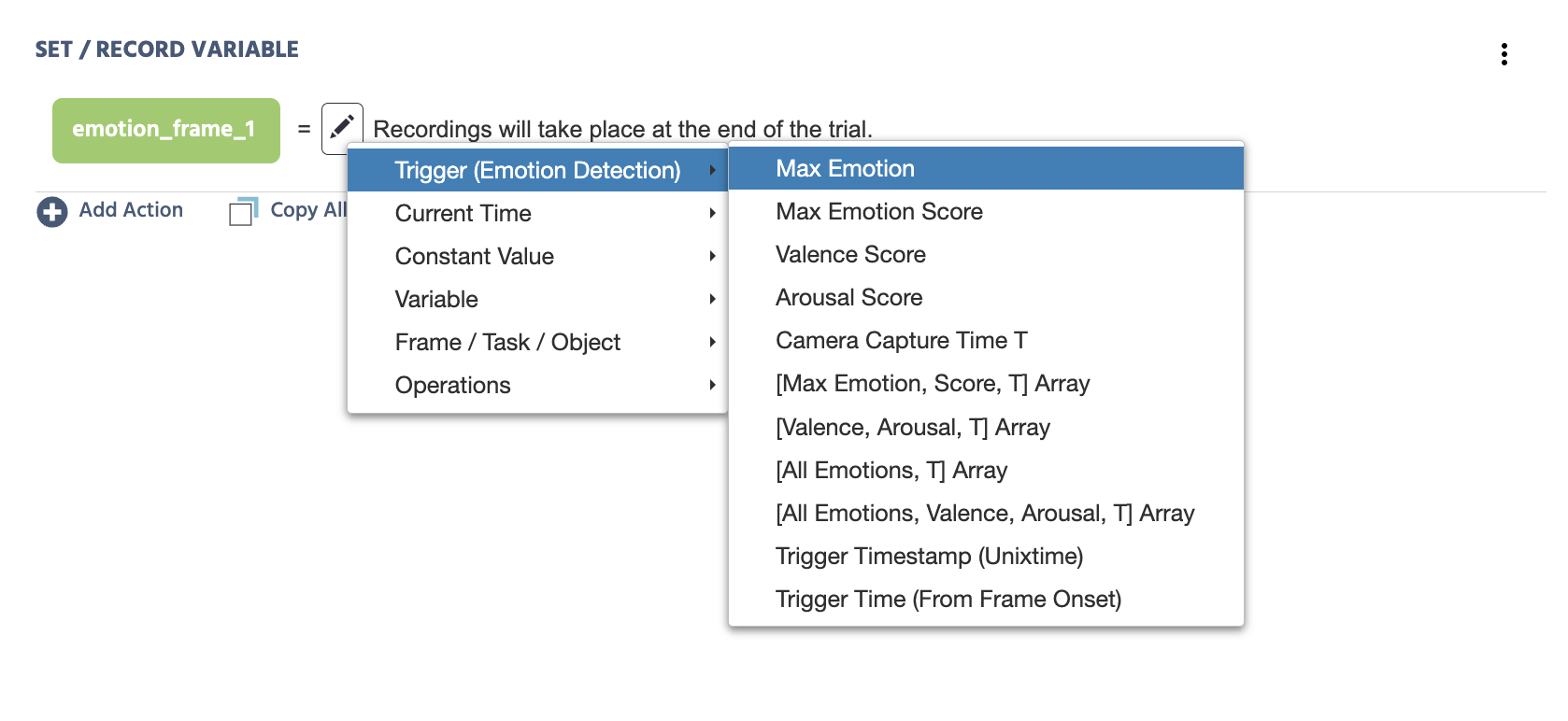

As a result of auto-creating this event, the following action will be created (as shown in the picture below). The variable name emotion_Emotion Detection is assigned and the trigger-specific value of [All Emotions, Valence, Arousal, T] Array is indicated as the values to be recorded during data collection.

From here, the variable name can be further edited via the Variables panel, and the trigger values can also be reassigned to be different values. See below for more options via the Value-select Menu.

Preview of Emotion Detection Data Collected in Labvanced

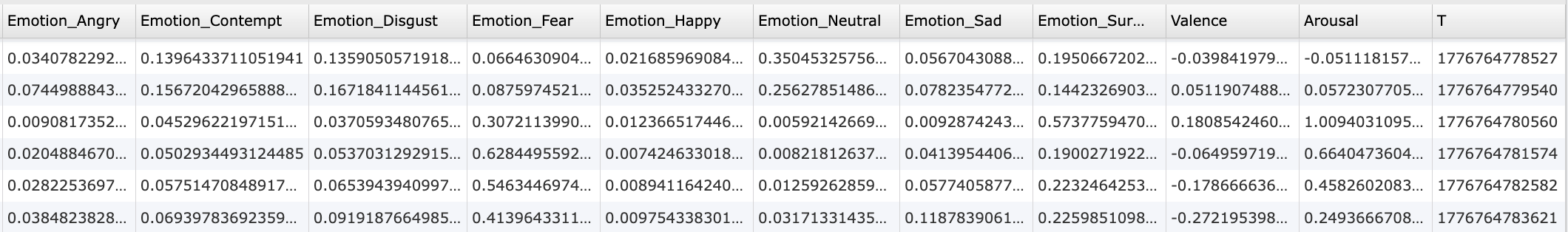

Below is an example of data recorded as a result of having the above event active in your task in Labvanced:

Enabling / Activating Emotion Detection

For the emotion detection trigger to function, emotion detection must be enabled in both the study Settings and the Task Controls, as explained below.

Study Settings - Enable Emotion Detection

In the Settings tab, navigate to Physiology → Emotion Detection and check the checkbox in order to activate emotion detection in your study.

Task Settings - Enable Emotion Detection

Under the Task Controls section in the Task Editor, navigate to the Physiological Signals tab and click the checkbox in order for emotion detection to be active in the particular task.

Upon activating emotion detection by clicking the checkbox, a dialog box will appear asking whether an event for recording emotion detection values should be created, and on which frame the recording should occur:

Trigger-specific Values for Emotion Detection

Upon selecting the Emotion Detection Trigger, the following options are available in the Value-Select Menu.

| Value | Description |
|---|---|
| Max Emotion | The maximum emotion detected. String value with the following parameters available: angry, contempt, disgust, fear, happy, neutral, sad, surprise. |
| Max Emotion Score | The score of the Max Emotion detected. |
| Valence Score | The numeric score for the valence detected. |
| Arousal Score | The numeric score for the arousal detected. |
| Camera Capture Time T | The adjusted timestamp of when the image snapshot (i.e., the camera capture) occurred, which is required in order to perform emotion detection calculations. Note: While the Trigger Timestamp is the time at which the trigger initiated, it takes a few milliseconds for the algorithm to capture the image frame locally and then process the emotional score. Thus, the Camera Capture Time T value is a more accurate timestamp to use. |
| [Max Emotion, Score, T] Array | An array that holds the following values: Max Emotion (string label), Score (numeric), Camera Capture T (Unixtime). |
| [Valence, Arousal, T] Array | An array that holds the following values: Valence (numeric), Arousal (numeric), Camera Capture T (Unixtime). |
| [All Emotions, T] Array | Records the scores for all 8 emotions and the Camera Capture T (Unixtime). Refer to the first 8 columns and the last column of the image preview in the data recording section above for a sense of the data collected with this trigger-specific value selected. Note: The values of the 8 emotions under All Emotions are relative to each other, as the scores for all 8 emotions sum to 1. |
| [All Emotions, Valence, Arousal, T] Array | Records the numeric scores for all 8 emotions, valence, arousal, and the Camera Capture T (Unixtime). Refer to the image preview in the data recording section above for a sense of the data collected with this trigger-specific value selected. Note: The values of the 8 emotions under All Emotions are relative to each other, as the scores for all 8 emotions sum to 1. |
| Trigger Timestamp (Unixtime) | The trigger timestamp in Unixtime. Note: Refer to the Camera Capture T value, as this is a more accurate value for when the emotion detection occurred, as explained above. |
| Trigger Time (From Frame Onset) | Time (in milliseconds) at which the trigger occurred, measured from the frame onset / start. |
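
For offline analysis, a recorded [All Emotions, Valence, Arousal, T] array could be unpacked along the lines of the following minimal Python sketch. Several points are assumptions made for illustration rather than documented behavior: the element order (eight emotion scores in the order listed for Max Emotion, followed by valence, arousal, and Camera Capture T), the helper name `parse_emotion_record`, and the example values, which are made up. Check the order against your own exported data before relying on it.

```python
from math import isclose

# Assumed order: the eight labels as listed for Max Emotion in the table above.
EMOTIONS = ["angry", "contempt", "disgust", "fear",
            "happy", "neutral", "sad", "surprise"]


def parse_emotion_record(record):
    """Split one recorded array into named fields (hypothetical helper)."""
    expected = len(EMOTIONS) + 3  # 8 scores + valence + arousal + capture time
    if len(record) != expected:
        raise ValueError(f"expected {expected} values, got {len(record)}")

    scores = dict(zip(EMOTIONS, record[:len(EMOTIONS)]))
    valence, arousal, capture_t = record[len(EMOTIONS):]

    # The eight emotion scores are relative to each other and should sum to 1.
    if not isclose(sum(scores.values()), 1.0, abs_tol=1e-3):
        raise ValueError("emotion scores do not sum to 1")

    max_emotion = max(scores, key=scores.get)
    return {
        "scores": scores,
        "max_emotion": max_emotion,
        "max_emotion_score": scores[max_emotion],
        "valence": valence,
        "arousal": arousal,
        "capture_t": capture_t,
    }


# Made-up example values, not real recorded data.
example = [0.02, 0.01, 0.01, 0.03, 0.70, 0.15, 0.05, 0.03,
           0.6, 0.4, 1700000000000]
parsed = parse_emotion_record(example)
print(parsed["max_emotion"], parsed["max_emotion_score"])
```

Deriving the maximum emotion from the full score array in this way mirrors what the Max Emotion and Max Emotion Score values provide directly, so recording the full array keeps both options open at analysis time.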

Practical Examples Featuring the Emotion Detection Trigger

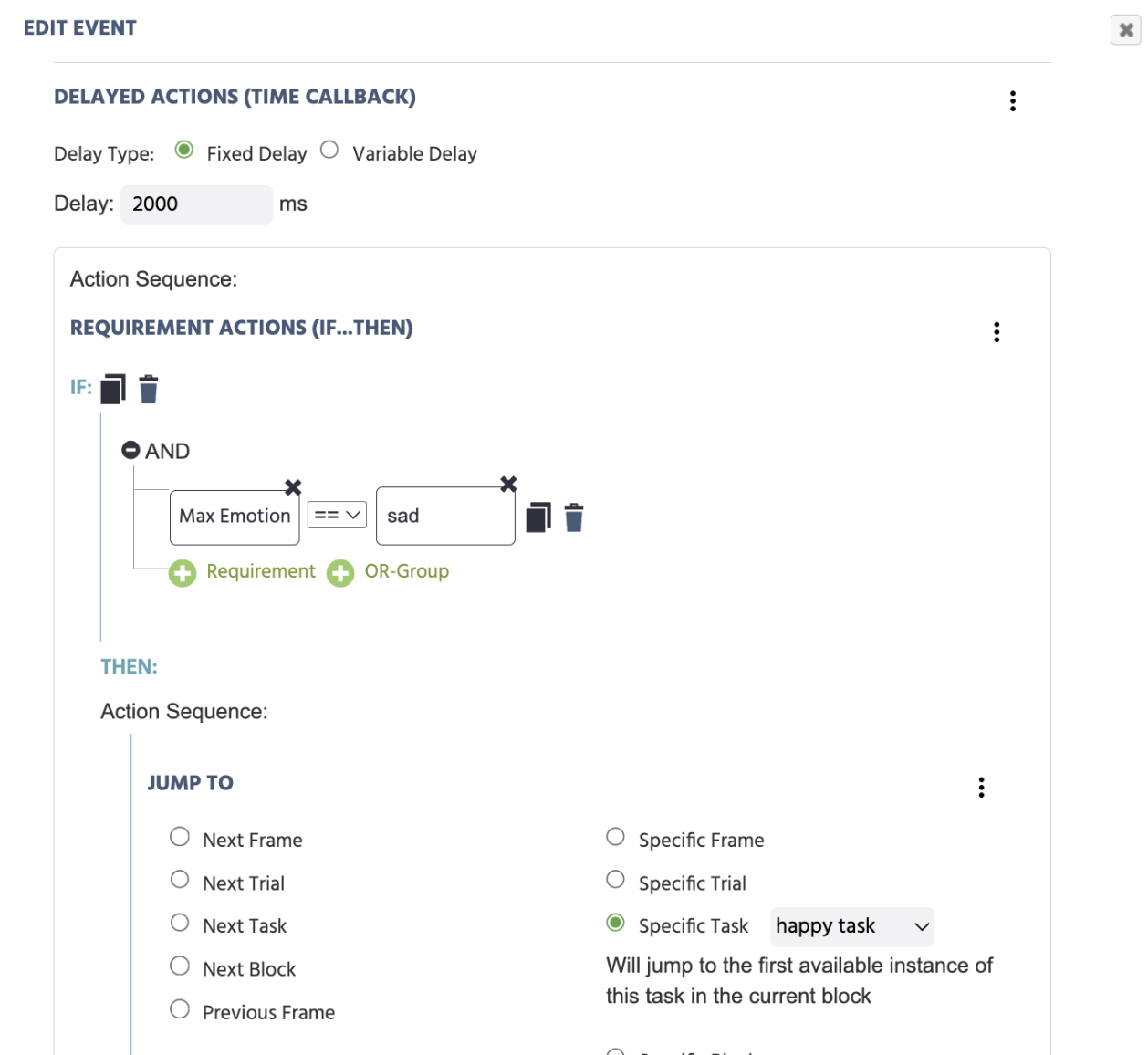

Controlling Experiment Progress Based on Emotional State

In this example, a Delayed Action contains a Requirement Action (If...Then) specifying that, after a delay of 2000 milliseconds, if the Max Emotion is equal to sad, the experiment uses Jump To to progress to a specific task.
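
The rule behind this event can be sketched in Python as follows. This is only an illustration of the logic, not Labvanced code: in Labvanced the event is configured through the event editor, and the function name `next_step`, the task names, and the callable used to read the current Max Emotion are all hypothetical.

```python
import time


def next_step(read_max_emotion, delay_ms=2000, target="sad",
              jump_task="follow_up_task",
              default_task="continue_current_task",
              sleep=time.sleep):
    """Wait delay_ms, then jump only if the detected Max Emotion equals target."""
    sleep(delay_ms / 1000)            # Delayed Action: wait before checking
    if read_max_emotion() == target:  # Requirement Action (If...Then)
        return jump_task              # Jump To a specific task
    return default_task               # requirement not met: no jump


# A no-op sleep is passed in so the demo runs instantly.
print(next_step(lambda: "sad", sleep=lambda seconds: None))
```

Note that the emotion is read after the delay has elapsed, so the decision reflects the participant's state at that moment rather than at the time the trigger first fired.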

Emotion Detection in Real Time

A series of images is presented, and the participant is asked to mimic each expression. The highest-scoring emotion detected in the participant's expression is reported, along with the valence and arousal values.